AI agents are stateless control loops built around Large Language Models. That’s not an opinion — it’s the architecture. Once an API call ends, the model retains nothing. Every new prompt starts from zero.

This isn’t a bug you can patch away with a better model or a bigger context window. It’s structural. And it’s why memory — implemented as external infrastructure, not model internals — is one of the most important architectural decisions you’ll make when building production agents.

Why Context Windows Aren’t Memory

The obvious workaround is to stuff everything into the context window and keep growing it. This fails in practice for four reasons:

Attention dilution. Transformers don’t prioritize tokens — they give roughly equal weight to everything. A 200k-token window doesn’t mean the model pays close attention to all 200k tokens. It means the relevant signal is competing with everything else.

The “lost in the middle” problem. Information buried in the middle of a long context consistently receives less attention than content at the beginning or end. This is documented, reproducible, and gets worse as context grows.

Linear cost scaling. Quadratic attention mechanisms mean doubling context roughly quadruples compute. For long-running or high-volume agents, this destroys unit economics fast.

Session boundaries. Even a 2M-token window resets when the session ends. Everything disappears. The agent that spent three hours on a task yesterday knows nothing about it today.

RAG helps you ground responses in external facts but doesn’t solve the continuity problem. It retrieves documents, not memories. It has no concept of user history, prior decisions, or accumulated preferences. True agent memory requires a different layer entirely.

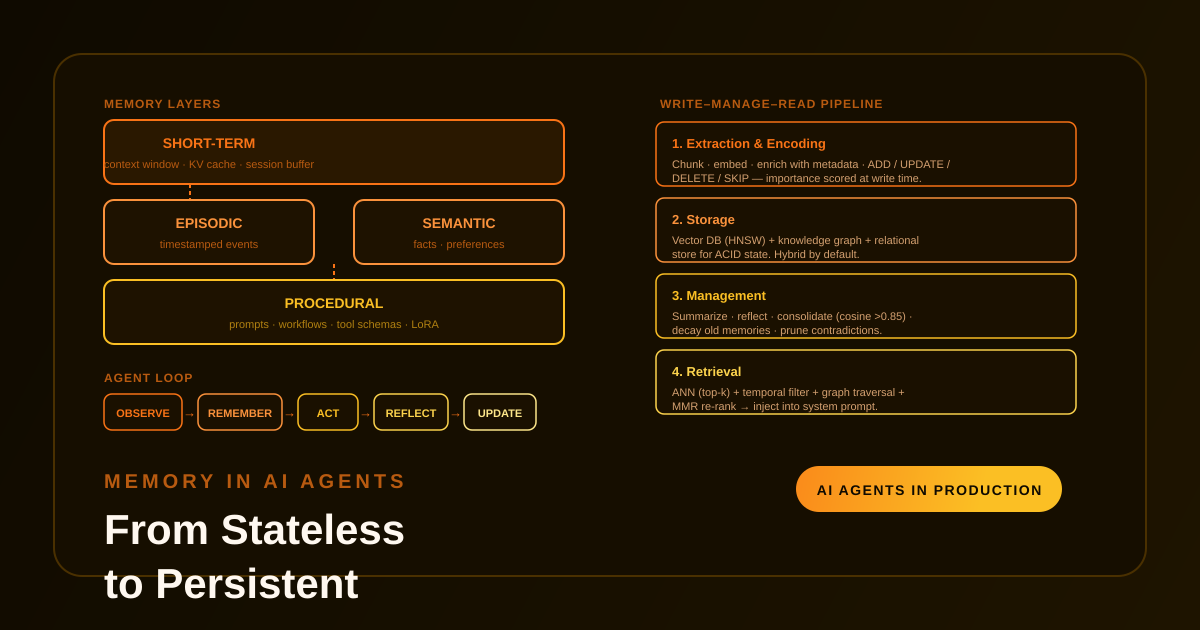

The Four Memory Types

The CoALA cognitive architecture paper (Princeton, 2023) provides the clearest taxonomy for agent memory. Four layers, each with distinct storage and retrieval mechanics:

Short-Term (Working) Memory

The in-context scratch pad. Managed within the LLM’s active context window — recent messages, tool outputs, intermediate reasoning traces, retrieved data from this session.

How it works: Conversation buffers with automatic summarization (typically hierarchical map-reduce) to compress history before it overflows the window. KV caching allows efficient extension without full recomputation.

Limitation: Finite capacity and positional encoding decay. The agent can’t hold a full project history in working memory — it can only hold a compressed, recency-weighted slice.

Example: A deployment agent knows “we’re rolling out to staging first” within the current session. When the session ends, that’s gone unless something persists it.

Episodic Memory

Timestamped, immutable records of specific past events. Think of it as the agent’s diary — what happened, when, and what the outcome was.

Stored as metadata-enriched logs in vector or graph databases, often with temporal edges for sequence reconstruction. An episodic entry might be: “On 2026-04-10, the agent deployed a patch but skipped the database migration step, causing a rollback.”

This layer is what allows an agent to learn from specific failures rather than repeating them.

Semantic Memory

Persistent, generalized knowledge — facts about users, domains, and systems that don’t belong to a specific event. “This user prefers Python over Java.” “Service X fails under load above 500 RPS.” “The staging environment requires VPN.”

Stored as high-dimensional vector embeddings (128–2048 dimensions) for fast similarity search. This is the layer most similar to traditional RAG, but scoped to agent-specific learned knowledge rather than a document corpus.

Procedural Memory

Encoded knowledge of how to do things: system prompts, decision trees, workflow templates, tool definitions, and fine-tuned behaviors. This layer doesn’t retrieve facts — it shapes the agent’s behavior at execution time.

Stored as JSON schemas, prompt templates, or lightweight LoRA adapters loaded dynamically. Changing procedural memory changes how the agent reasons, not just what it knows.

The Write–Manage–Read Pipeline

Memory isn’t a database you append to. Effective implementations follow a four-stage pipeline that treats memory as a living structure — not an archive.

1. Extraction and Encoding (Write)

When a conversation or action completes, an extraction pipeline — typically LLM-driven — identifies what’s worth keeping. Raw text gets chunked semantically (with overlap to preserve cross-chunk context), converted to dense vector embeddings, and enriched with metadata: timestamps, importance scores, entities extracted via NER.

The extraction layer makes one of four decisions for each candidate:

- ADD — new fact, store it

- UPDATE — modify an existing record

- DELETE — remove outdated or contradicted information

- SKIP — duplicate or low-value, ignore

This prevents unbounded growth and the noise that comes with it.

2. Storage (The Persistence Layer)

A production memory store is typically a hybrid architecture:

Vector databases (Pinecone, Weaviate, ChromaDB, pgvector) handle semantic and episodic memory. HNSW indexes enable fast approximate nearest-neighbor search via cosine similarity or Euclidean distance. Choose based on your scale and whether you need managed infrastructure or tight database integration.

Knowledge graphs handle relational memory — entities, relationships, bi-temporal modeling (event time vs. system time) for complex multi-hop queries. Useful when you need to reason about how facts relate, not just retrieve similar ones.

Relational/key-value stores (PostgreSQL, Redis) handle structured state that requires ACID guarantees: session IDs, account state, workflow progress. Don’t use vectors for this.

Converged databases that unify all three in one engine are available but add operational complexity. Start with the simplest layer that works, then add.

3. Management (Consolidation, Decay, Forgetting)

This is the layer most implementations skip, and it’s where memory systems rot.

Summarization and compaction: Hierarchical map-reduce condenses long histories to manage token costs and context rot. Without this, retrieval quality degrades as the store grows.

Reflection: Self-critique loops run on stored episodes to extract higher-level insights. An agent that failed three times on the same task type should generate a structured reflection: what pattern caused the failure, what would prevent it. These reflections are stored back as semantic memory.

Consolidation and decay: Similar memories merge via cosine clustering (typical threshold: >0.85). A temporal decay function reduces importance scores for older memories: importance = relevance × exp(-λ × age). Stale or contradicted memories get pruned. Without decay, the store fills with outdated state that poisons retrieval.

Trust scoring: Memories from different sources get different confidence weights. A fact the agent observed directly is more reliable than one inferred from a single user message.

4. Retrieval (Read)

Before the LLM processes a new query, the memory layer runs a retrieval pass:

query → embedding → ANN search (top-k = 5–20)

↓

temporal filter

↓

graph traversal (multi-hop for relational queries)

↓

keyword search (BM25 hybrid)

↓

MMR re-ranking (diversity filter)

↓

inject into system prompt

Maximal Marginal Relevance (MMR) prevents the top-k results from being variations of the same memory. Retrieved items are re-ranked, summarized if needed, and injected into the system prompt with markers that ground the model’s response in factual history rather than hallucinated plausibility.

The full loop:

Observe → Remember → Act → Reflect → Update

On modern hardware this runs in well under a second for typical store sizes. Latency only becomes a concern above tens of millions of embeddings without proper indexing and quantization.

The Failure Modes to Watch

A few that hit production systems regularly:

Context window vs. retrieval mismatch. Short-term memory fills faster than expected, triggering premature summarization that loses details still needed. Tune buffer size and summarization thresholds against your actual usage patterns, not theoretical maximums.

Retrieval surface contamination. If the extraction pipeline is too permissive, low-signal memories dilute retrieval quality. An agent that stores every utterance will retrieve noise. Importance scoring at write time is not optional.

Stale memory poisoning. Without decay and consolidation, an agent will retrieve memories about a system’s state from months ago as if they’re current. Add timestamps, enforce TTLs for high-volatility facts, and run consolidation passes regularly.

Privacy and multi-tenancy. User-scoped memory stores require strict isolation. Row-level security, namespace partitioning, and GDPR-compliant deletion are non-negotiable for production deployments. Plan for this from the start — retrofitting isolation into a shared store is painful.

Evaluation gaps. Most teams measure agent task performance and notice memory failures only when things break. Add explicit memory benchmarks: precision@K for retrieval accuracy, coherence testing under session resets, and latency under load for the retrieval path.

What to Build First

If you’re adding memory to an existing agent, do it in layers:

Start with a conversation buffer + hybrid vector/relational store. Embedding retrieval and a simple summarization pipeline gets you 80% of the value with 20% of the complexity.

Add an extraction pipeline. LLM-driven extraction with ADD/UPDATE/DELETE/SKIP logic prevents store rot from day one.

Add reflection prompts on completed tasks. Even simple self-critique (“what worked, what didn’t”) fed back as semantic memory meaningfully improves subsequent performance.

Add decay and consolidation. Schedule this as a background job. It doesn’t need to run in the hot path.

Give the agent memory tools. Let it decide what to remember and forget. Agentic memory management is more accurate than purely automatic pipelines because the model has context about what actually mattered.

Where This Is Going

As of 2026, memory has moved from “nice to have” to a first-class architectural component in serious agent deployments. A few trends worth tracking:

Sleep-time computation. Agents that reorganize and consolidate memories during idle periods — offline, away from the hot path — are showing meaningful accuracy gains (18%+ in some published benchmarks) with lower runtime costs.

Multi-agent shared memory. Distributed stores with consensus mechanisms let agent swarms share episodic and semantic memory without duplicating state. The coordination overhead is real, but so is the gain for long-running multi-agent workflows.

Lifelong learning without catastrophic forgetting. This remains a hard problem. Continuous memory updates without forgetting earlier, still-valid knowledge requires careful consolidation strategy. No off-the-shelf solution solves this cleanly yet.

The next meaningful step is proactive, anticipatory memory — stores that surface relevant context before it’s requested, based on what the agent is likely to need next. This moves memory from retrieval infrastructure to something closer to genuine cognitive architecture.

Memory is what separates an expensive chatbot from an agent that actually improves over time. The infrastructure is mature and implementable today. The hard part is the design: what to store, when to forget, how to retrieve without adding noise. Get that right and everything downstream — reliability, personalization, task performance — improves with it.

Part of the AI Agents in Production series.